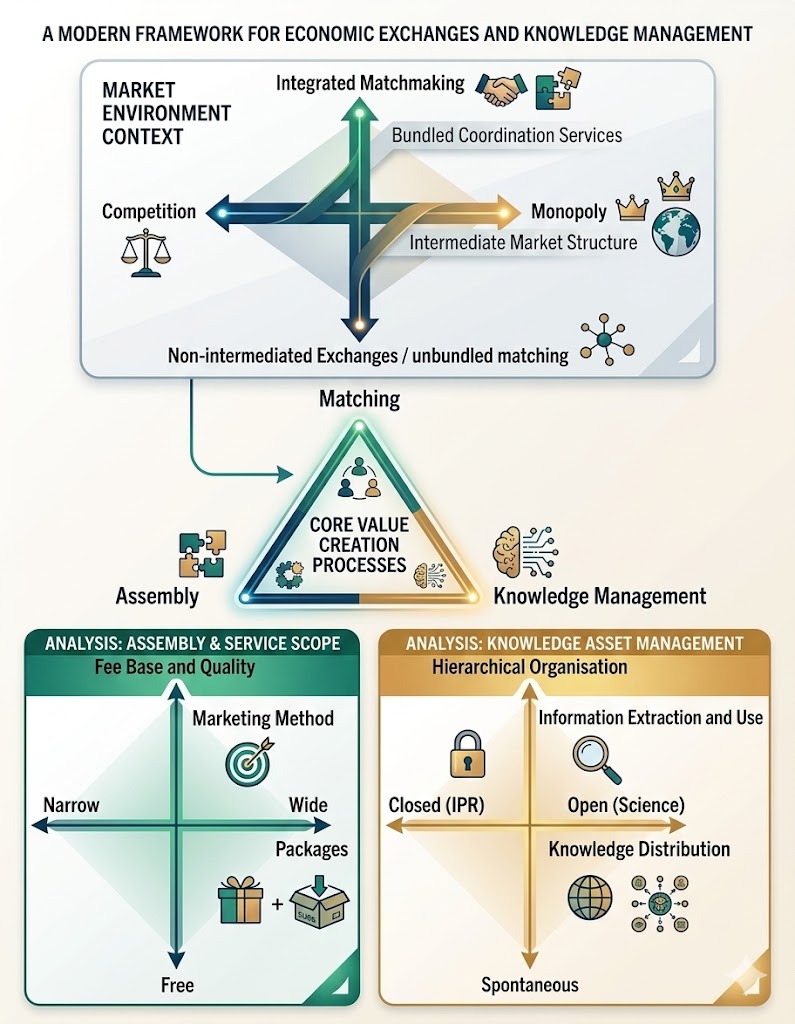

First Observation: AI as a Search Engine

Matching intent to information.

The Search Engine Benchmark

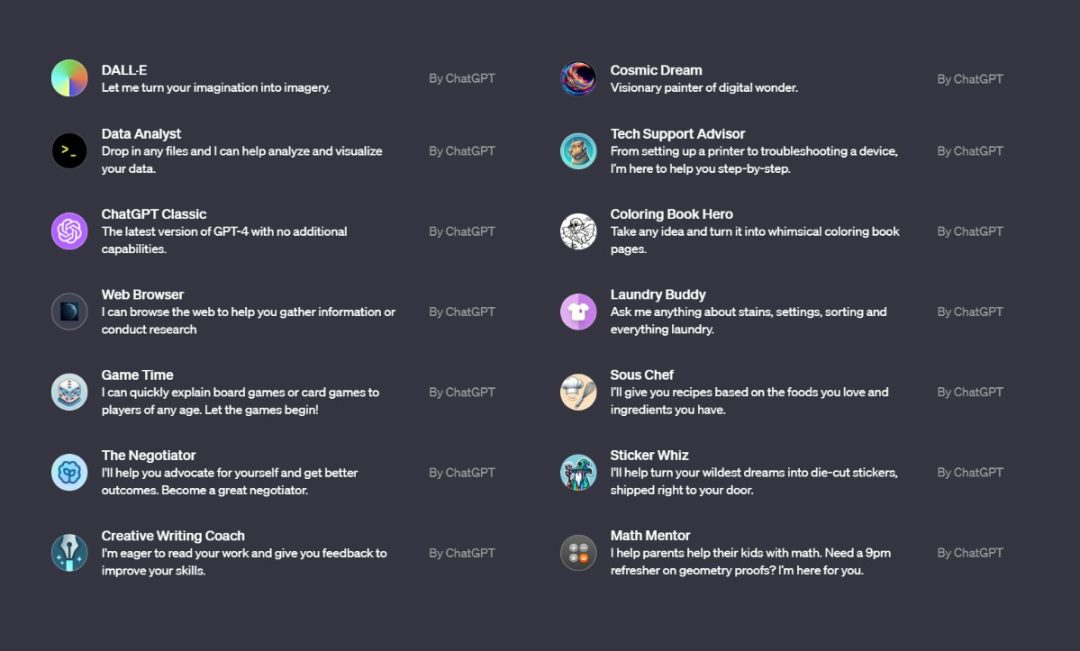

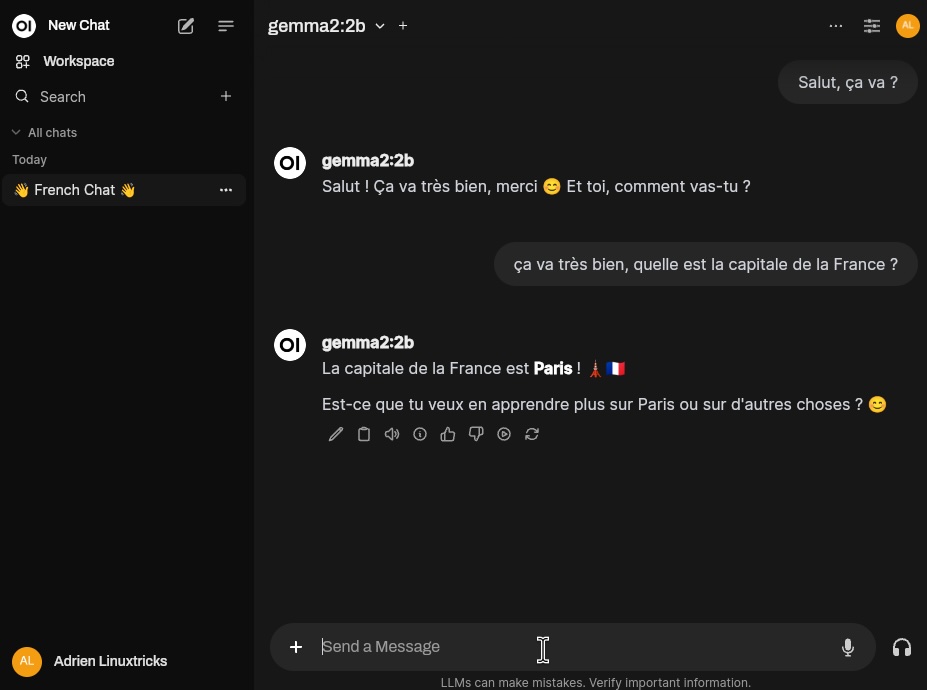

ChatGPT launched with an interface nearly identical to Google's. This visual similarity captures an economic reality: both reduce transaction costs by connecting user intent to relevant capability.

The Core Matching Function

In platform economics, this is matching. Google matches queries to ranked web pages; foundation models (FMs) match prompts to generated computational responses. Both are matching architectures.

Second Observation: AI as a Bundled Platform

Bundling discrete services into a unified product.

The eCommerce Assembling Benchmark

Amazon bundles product catalogs, reviews, and logistics into one package. In platform economics, this is assembling. It eliminates the cost of using multiple separate tools.

AI's Bundling Function

FMs perform the same assembling function. Systems like ChatGPT bundle text, code, search, and images. Google bundles Gemini into Search and Workspace.

Third Observation: The Knowledge Logic of Social Media

Usage as a training signal.

Messaging & Social Media

In social media, every user action is harvested to build preference models. AI platforms do the same with Reinforcement Learning from Human Feedback (RLHF), learning from every interaction.

The AI Data Flywheel

FMs are digital platforms that match intent to capability, assemble modular tools, and manage knowledge through data. We need a framework that unifies these three.

Six Strategic Conditions

Operationalizing the PEMAK tradeoffs for Foundation Model vendors.

- MONOPOLY (Matching)

- INTEGRATED (Matching)

- WIDE (Assembling)

- PAID (Assembling)

- HIERARCHICAL (Knowledge)

- OPEN (Knowledge)

Which combinations of strategic conditions are sufficient for business model viability?

Why "Sufficient"?

Standard regression isolates the "average net effect" of a single independent variable. But in complex digital ecosystems, strategies are interdependent. We are not looking for one necessary master key, but rather the equifinal recipes (sufficient configurations) that lead to the same outcome (Ragin, 2008).

Why Standard Regression Fails Here

Four structural limitations when analyzing complex digital ecosystems.

Sample Too Small

N=49. Logistic regression requires N≥100 for stable estimates. With 49 cases across six parameters, regression models overfit severely, rendering standard errors unreliable.

Conditions Interact

OPEN=1 is a positive driver for Meta, but would destroy Anthropic's premium pricing model. Regression assumes independent, additive effects (fixed β), failing to capture these structural interdependencies.

Multiple Paths to Success

Open-weights, premium APIs, and platform bundlers achieve viability through entirely different structural mechanisms. Regression forces a single "best-fit" average path, violating equifinality.

Skewed Outcome

Only 5 non-viable cases out of 49. Regression-based models break down at this imbalanced ratio, where single outlier cases can swing the entire model's coefficients.

Qualitative Comparative Analysis (QCA)

Finding exact strategic recipes instead of average net effects.

1. Regression Logic (Additive)

Evaluates the "average net effect" of each variable across all cases, assuming they operate independently. Masks specific strategic contexts.

2. QCA Logic (Configurational)

Identifies the specific combinations of choices that produce the outcome. Directly maps equifinality (multiple valid paths).

Methodological Precedent

Chanson & Rocchi (2024): Applied csQCA to N=30 French neobanks. Showed that binary viability coding, grounded in pragmatic legitimacy and market recognition, produces valid configurational findings without requiring private accounting data.

Marx (2010): csQCA validity relies on avoiding random correlations via the Marx criterion (N ≥ 2^(k-1)). For k=6 conditions, minimum N=32. Our census of N=49 satisfies this rigorously.
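The arithmetic behind the case-count threshold can be checked in a few lines. This is a minimal sketch that assumes only the rule as quoted above (N ≥ 2^(k-1)); the function name `marx_minimum_cases` is hypothetical.

```python
def marx_minimum_cases(k: int) -> int:
    """Minimum number of cases under the rule N >= 2^(k-1) quoted above."""
    if k < 1:
        raise ValueError("k must be a positive number of conditions")
    return 2 ** (k - 1)


k = 6    # six PEMAK conditions
n = 49   # vendors in the census
minimum = marx_minimum_cases(k)
print(minimum, n >= minimum)  # 32 True
```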

Case Selection: Who Is in the Census and Why

Unit of Analysis: The Foundation Model Vendor (N=49 vendors). Evaluated against a multi-gateway presence methodology.

Multi-Gateway Presence Methodology

| Gateway Type | Gateways Evaluated (11 Total) |
|---|---|
| Cloud Providers | Alibaba Cloud, AWS Bedrock, Google Cloud Vertex AI, Microsoft Azure AI, Tencent Cloud |
| API Aggregators | CloudFlare AI Gateway, OpenRouter, Vercel AI Gateway |
| Community Hubs | Hugging Face Hub, Arena.ai, LiteLLM |

Data Sourcing & Traceability

Connecting strategic codings to verifiable evidence.

Collection Channels

Cloud (AWS Bedrock, Azure AI, Alibaba Cloud), Aggregators (OpenRouter, Vercel), and Hubs (Hugging Face, Arena.ai).

Model Cards, GitHub Licenses (Apache 2.0/MIT), Terms of Service, and Public ARR reports (e.g., OpenAI $11.6B).

Every exit (Character.ai, Adept AI) documented with ≥3 independent citations (TechCrunch, SEC filings, official announcements).

Coding Traceability Sample: Zhipu AI / GLM

| Condition | Val | Empirical Evidence & Source |
|---|---|---|
| WIDE | 1 | GLM-4 flagship covers text, code, vision, & tool use. (Source: bigmodel.cn) |
| HIER. | 1 | Full internal pipeline control; CAC compliance requirements. (Source: THUDM Repo) |
| OPEN | 1 | Weights released under Apache 2.0. (Source: huggingface.co/THUDM) |
| PAID | 0 | Free consumer app + free developer API tiers. (Source: BigModel.cn pricing) |

The Data Matrix:

Only 15 of 64 logically possible configurations are populated. The market exhibits high strategic coherence.

Abridged Binary Data Matrix

N=49 Vendors

| Vendor | MONO | INT | WIDE | PAID | HIER | OPEN | Pathway |
|---|---|---|---|---|---|---|---|
| OpenAI | 1 | 1 | 1 | 1 | 1 | 0 | Proprietary |
| | 1 | 1 | 1 | 0 | 1 | 0 | Proprietary |
| Anthropic | 1 | 0 | 1 | 1 | 1 | 0 | Collective |
| Mistral AI | 0 | 0 | 1 | 0 | 1 | 1 | Collective |
| DeepSeek | 0 | 0 | 1 | 0 | 1 | 1 | Collective |
| Meta | 1 | 0 | 1 | 0 | 1 | 1 | Collective |
| Cohere | 0 | 0 | 1 | 1 | 1 | 0 | Collective |
| xAI | 1 | 1 | 1 | 0 | 1 | 0 | Proprietary |
| Apple | 1 | 1 | 1 | 0 | 1 | 0 | Y=0 Deviant |
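Counting populated configurations is a simple truth-table tally. The sketch below uses only the named rows of the abridged matrix (the row without a vendor name is omitted), so it illustrates the procedure rather than reproducing the full-census figure of 15 populated configurations. The variable names are hypothetical.

```python
from collections import Counter

CONDITIONS = ("MONO", "INT", "WIDE", "PAID", "HIER", "OPEN")

# Named rows copied from the abridged matrix; this is not the full N=49 census.
ROWS = {
    "OpenAI":     (1, 1, 1, 1, 1, 0),
    "Anthropic":  (1, 0, 1, 1, 1, 0),
    "Mistral AI": (0, 0, 1, 0, 1, 1),
    "DeepSeek":   (0, 0, 1, 0, 1, 1),
    "Meta":       (1, 0, 1, 0, 1, 1),
    "Cohere":     (0, 0, 1, 1, 1, 0),
    "xAI":        (1, 1, 1, 0, 1, 0),
    "Apple":      (1, 1, 1, 0, 1, 0),
}

# Six binary conditions give 2^6 logically possible configurations.
logically_possible = 2 ** len(CONDITIONS)

# Each distinct tuple is one truth-table row; the count is its number of cases.
truth_table = Counter(ROWS.values())
populated = len(truth_table)

print(f"{populated} of {logically_possible} configurations populated in this sample")
for config, count in truth_table.most_common():
    print(dict(zip(CONDITIONS, config)), count)
```

Running the same tally over the full census is what yields the populated-configuration count reported on the slide.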

Condition Frequencies

Coding Protocol: Binary Decision Rules

Explicit thresholds for the six PEMAK conditions to ensure replicability.

| Condition | Coded = 1 (Present) | Coded = 0 (Absent) |
|---|---|---|
| MONOPOLY | Explicit AGI goal OR >30% API market share OR parent platform dominance (>50%) w/ LLM bundling | Competitive positioning, niche focus, market share <30%, open-source commoditization |
| INTEGRATED | ≥3 cross-product integrations with shared context windows OR ecosystem lock-in | <3 integrations OR API-only distribution OR explicit strategic neutrality |
| WIDE | Single flagship model with native multimodal processing (text+code+vision+audio) in unified arch | Text-focused flagship with separate vision/audio models OR adapters only |
| PAID | Premium-only pricing with no substantive free tier for production use | Freemium model with significant free access OR ad-supported |
| HIERARCHICAL | Documented Constitutional AI framework OR external safety audits OR published governance | Standard RLHF without constitutional grounding OR no external audit |
| OPEN | Downloadable model weights publicly available under permissive license | API-only access OR proprietary weights OR restrictive licensing |
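A decision rule like the MONOPOLY row can be written as an explicit predicate, which makes the thresholds auditable. The following is a sketch only: the `MonopolyEvidence` fields and the `code_monopoly` function are hypothetical names, and the treatment of a share of exactly 30% (coded 0 here) is an assumption, since the table leaves that boundary unspecified.

```python
from dataclasses import dataclass


@dataclass
class MonopolyEvidence:
    # Hypothetical fields mirroring the three clauses of the MONOPOLY rule.
    explicit_agi_goal: bool
    api_market_share: float       # fraction of the API market, 0.0 to 1.0
    parent_platform_share: float  # parent platform's share of its own market
    bundles_llm: bool             # parent platform bundles the vendor's LLM


def code_monopoly(e: MonopolyEvidence) -> int:
    """Return 1 if any clause of the MONOPOLY rule holds, else 0."""
    share_clause = e.api_market_share > 0.30  # strict ">" is an assumption
    parent_clause = e.parent_platform_share > 0.50 and e.bundles_llm
    return int(e.explicit_agi_goal or share_clause or parent_clause)


# Illustrative inputs, not coded vendors from the census.
niche = MonopolyEvidence(False, 0.05, 0.0, False)
bundler = MonopolyEvidence(False, 0.10, 0.80, True)
print(code_monopoly(niche), code_monopoly(bundler))  # 0 1
```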

Boundary Case Example: Anthropic

Anthropic lacks proprietary data centers but has a massive AWS partnership. Crisp binary rule: INTEGRATED=0 (fewer than 3 cross-product integrations). fsQCA triangulation: INTEGRATED=0.33. The same sufficient configurations emerge under both codings, which indicates that the result is stable.

Expert Interview Design: Leveraging FMTI Standards

Standardized questionnaire mapping Stanford CRFM indicators to PEMAK strategic conditions.

Expert Validation Framework (FMTI-Derived)

Our expert panel used a structured coding instrument modeled after the 100 indicators of the Foundation Model Transparency Index. This ensures that high-level strategy codings are grounded in verifiable technical and organizational disclosure.

| FMTI Domain | FMTI Indicator (Sample) | PEMAK Condition | Consensus Coding Logic |
|---|---|---|---|
| Upstream | Data Acquisition Methods | HIERARCHICAL | Verify if data curation is strictly internal (H=1) or relies on community sourcing (H=0). |
| Model | Capabilities & Modalities | WIDE | Confirm native text+vision+audio processing in a single flagship architecture (W=1). |
| Downstream | Distribution & License | OPEN | Binary check: Weights released under Apache 2.0/MIT/CC-BY (O=1) vs Proprietary (O=0). |
| Downstream | Usage Policies & Pricing | PAID | Analyze paywalls and data-usage opt-out terms. No substantive free tier required for P=1. |
| Organization | Ecosystem & Governance | MONO / INT | Assess adjacent bottleneck leverage (M=1) and cross-stack bundling depth (I=1). |

Calibration: Three-Source Triangulation & Expert Panel

Subjective strategic choices translated into binary logic via strict independent convergence.

Three-Source Triangulation Protocol

Each binary assignment requires majority agreement (≥2/3) across independent source strata. Disagreements trigger qualitative process tracing.

| Stratum | Description & Sources |
|---|---|
| 1: Expert Panel | Structured evaluation of primary evidence. Cross-disciplinary panel: VCs (MONOPOLY, PAID), ML engineers (INTEGRATED, WIDE, OPEN), Platform Academics (HIERARCHICAL). Independent coding prior to group discussion. |
| 2: Academic & Benchmarks | Systematic review of quantitative metrics. Stanford HAI Index, State of AI Report, LMSYS Chatbot Arena, EU AI Act filings. |
| 3: Public Discourse | Analysis of declared strategic intent. Executive statements, GitHub Issues, developer forums, investor relations. |
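The majority rule across the three strata can be sketched as a small function. The name `triangulate` is hypothetical, and flagging every non-unanimous vote for process tracing is an assumption about how "disagreements" are operationalized.

```python
from typing import Tuple


def triangulate(expert: int, academic: int, discourse: int) -> Tuple[int, bool]:
    """Return (binary coding, needs_process_tracing) for one vendor and condition."""
    votes = (expert, academic, discourse)
    if any(v not in (0, 1) for v in votes):
        raise ValueError("each stratum must vote 0 or 1")
    # With three binary votes, a majority of at least 2 out of 3 always exists.
    value = 1 if sum(votes) >= 2 else 0
    # Assumption: any split vote is flagged for qualitative process tracing.
    needs_tracing = len(set(votes)) > 1
    return value, needs_tracing


print(triangulate(1, 1, 1))  # (1, False)
print(triangulate(1, 0, 1))  # (1, True)
print(triangulate(0, 0, 1))  # (0, True)
```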

Inter-Rater Reliability

Calculated on a random 12-vendor subset. Pre-specified threshold: Cohen's κ ≥ 0.70.

| Condition | Cohen's κ | Krippendorff's α |
|---|---|---|
| MONOPOLY | 0.74 | 0.76 |
| INTEGRATED | 0.86 | 0.88 |
| WIDE | 0.79 | 0.82 |
| PAID | 0.93 | 0.95 |
| HIERARCHICAL | 0.81 | 0.85 |
| OPEN | 1.00 | 1.00 |

* MONOPOLY relies on interpretative market boundaries, leading to lower (but passing) κ. Process tracing resolves disagreements.
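For readers unfamiliar with the statistic, Cohen's κ compares observed agreement between two raters with the agreement expected by chance. The sketch below implements the standard two-rater formula from scratch; the two coding vectors are hypothetical 12-vendor examples, not the panel's actual codings.

```python
def cohens_kappa(rater_a, rater_b):
    """Cohen's kappa for two raters who coded the same items."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("raters must code the same non-empty set of items")
    n = len(rater_a)
    # Observed agreement: share of items on which the raters match.
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    # Chance agreement: product of the raters' marginal label frequencies.
    labels = set(rater_a) | set(rater_b)
    expected = sum((rater_a.count(l) / n) * (rater_b.count(l) / n) for l in labels)
    if expected == 1.0:
        return 1.0  # both raters used a single identical label throughout
    return (observed - expected) / (1 - expected)


# Hypothetical codings of one condition for a 12-vendor subset.
rater_a = [1, 1, 1, 1, 0, 0, 0, 0, 0, 0, 0, 0]
rater_b = [1, 1, 1, 0, 0, 0, 0, 0, 0, 0, 0, 0]

kappa = cohens_kappa(rater_a, rater_b)
print(f"kappa = {kappa:.2f}, passes 0.70 threshold: {kappa >= 0.70}")
```

In this toy example the raters disagree on one vendor out of twelve, giving observed agreement of 11/12 against chance agreement of 7/12.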

Methodological Robustness & Falsifiability

Five-stage QCA analysis protocol and pre-registered falsification criteria.

Five-Stage Analysis Protocol

Falsifiability Criteria

The methodology is considered falsified if any of these pre-registered conditions occur:

- Expert panel consistently achieves κ < 0.70.

- Single-condition analyses produce contradictory predictions across cases.

- Fuzzy-set triangulation (fsQCA) produces different archetype assignments than csQCA.

- Sensitivity analysis reveals systematic instability under ±5% threshold variation.

Six conditions require N ≥ 32 under the Marx criterion (2^(6-1) = 32). Our census of N=49 clears this bar, which guards against random configurational correlations.

Foundation Model Transparency Index (FMTI)

External validation via Stanford CRFM's 100-indicator assessment (Dec 2025).

100 Transparency Indicators

Stanford evaluates vendors across 100 indicators in three domains. Average score in Dec 2025 dropped to 40/100, reflecting stricter disclosure standards.

Questionnaire Sample

- Does the developer disclose the Top-5 data sources used?

- Is the amount of compute (FLOPs) used for pre-training disclosed?

- Are red teaming methodologies and findings published?

December 2025 Benchmarks

| Vendor | Score | Status |
|---|---|---|
| IBM (Granite) | 95/100 | Top Performer |
| Writer | 72/100 | Leader |
| AI21 Labs | 66/100 | Leader |
| OpenAI (GPT-4) | 48/100 | Average |
| DeepSeek | 32/100 | Developing |
| Alibaba (Qwen) | 26/100 | Developing |
| xAI / Midjourney | 14/100 | Low |

Citation: Wan et al. (2025). The 2025 Foundation Model Transparency Index. Stanford CRFM / arXiv:2512.10169.

Necessity Analysis — No Single Condition Is Required

Equifinality confirmed: multiple paths to viability, no universal key.

Consistency (Y=1)

| Condition | Cons. | 0.90 Threshold |
|---|---|---|
| MONOPOLY | 0.303 | FAIL |
| ~MONOPOLY | 0.697 | FAIL |
| INTEGRATED | 0.242 | FAIL |
| ~INTEGRATED | 0.758 | FAIL |
| WIDE | 0.576 | FAIL |
| PAID | 0.242 | FAIL |
| HIERARCHICAL | 0.848 | FAIL (highest) |
| OPEN | 0.394 | FAIL |

"No single condition is necessary. The market has no master key."

Equifinality is confirmed: Different combinations of structural choices produce the identical outcome of commercial viability.
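For crisp sets, the necessity consistency of a condition X for outcome Y is the share of Y=1 cases that also have X=1. The sketch below shows the calculation on hypothetical toy data (not the census), with the function name `necessity_consistency` chosen for illustration.

```python
def necessity_consistency(condition, outcome):
    """Share of outcome-positive cases that also show the condition (crisp sets)."""
    if len(condition) != len(outcome):
        raise ValueError("condition and outcome must cover the same cases")
    among_positive = [x for x, y in zip(condition, outcome) if y == 1]
    if not among_positive:
        raise ValueError("no cases with outcome = 1")
    return sum(among_positive) / len(among_positive)


# Hypothetical toy data: six cases, five of them viable (Y=1).
condition = [1, 1, 0, 1, 0, 1]
outcome = [1, 1, 1, 0, 1, 1]
negated = [1 - x for x in condition]

THRESHOLD = 0.90  # necessity threshold used in the table above
for label, values in (("X", condition), ("~X", negated)):
    cons = necessity_consistency(values, outcome)
    verdict = "PASS" if cons >= THRESHOLD else "FAIL"
    print(f"{label}: {cons:.3f} {verdict}")
```

As in the table, the consistency of a condition and of its negation sum to 1, so at most one of the two can reach the threshold.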

Strategic Implication

This coexistence is not a transitional phase resulting from market immaturity. It is the stable structure of this market. Multiple completely distinct strategic recipes produce sustainable commercial viability.

The Result — Two Paths to Commercial Viability

~INTEGRATED + MONOPOLY · WIDE → Y=1. Overall consistency: 0.917.
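The solution formula can be evaluated case by case. The sketch below applies it to the named rows of the abridged data matrix only, assuming Y=1 for every row that lists a pathway and Y=0 for Apple; because it covers 8 cases rather than the full census, its consistency will not match the 0.917 reported above.

```python
# Named rows from the abridged matrix: (MONO, INT, WIDE, Y).
# Assumption: Y=1 wherever a pathway is listed; Apple is the Y=0 deviant case.
CASES = {
    "OpenAI":     (1, 1, 1, 1),
    "Anthropic":  (1, 0, 1, 1),
    "Mistral AI": (0, 0, 1, 1),
    "DeepSeek":   (0, 0, 1, 1),
    "Meta":       (1, 0, 1, 1),
    "Cohere":     (0, 0, 1, 1),
    "xAI":        (1, 1, 1, 1),
    "Apple":      (1, 1, 1, 0),
}


def solution(mono: int, integrated: int, wide: int) -> int:
    """~INTEGRATED + MONOPOLY * WIDE, evaluated on crisp (0/1) values."""
    return int((not integrated) or (mono and wide))


covered = [name for name, (m, i, w, _) in CASES.items() if solution(m, i, w)]
viable = [name for name, row in CASES.items() if row[3] == 1]
covered_and_viable = [name for name in covered if CASES[name][3] == 1]

# Sufficiency consistency: share of covered cases that are viable.
consistency = len(covered_and_viable) / len(covered)
# Coverage: share of viable cases that the formula accounts for.
coverage = len(covered_and_viable) / len(viable)
print(f"consistency={consistency:.3f} coverage={coverage:.3f}")
```

On this sample the only inconsistent case is Apple, which satisfies MONOPOLY · WIDE but is coded Y=0.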

Collective Path

n=34/44

"You do not own the infrastructure — and that is enough."

Llama, Mistral, DeepSeek, z.ai — weights as public commons

Anthropic, Cohere, ArceeAI — active orchestration, closed weights

OpenRouter, Perplexity, Morph — benchmark legitimacy entry

Proprietary Path

n=13/44

"Adjacent monopoly + broad capability = captive distribution."

Existing billion-user base (Search, Social, OS)

Google $237B, Microsoft $211B, Meta $134B fund R&D

Wide capability scope creates workflow lock-in

Deviant Cases — Five Y=0 Vendors Analyzed

All are event-specific or actor-specific. None are configuration-determined.

Character.ai

Google acqui-hire, Aug 2024 ($2.7B). API discontinued post-acquisition.

Event-specific exit.

Adept AI

Amazon acqui-hire, Jun 2024 ($430M). Team absorbed for AGI research.

Actor-level acquisition.

Apple

Apple Intelligence is iOS-only. No external API by strategic choice.

Platform gatekeeper choice.

Nvidia

Repositioned to AI infra (NIM platform). GPU business already dominates.

Strategic pivot to hardware advantage.

Aleph Alpha

Pivoted to sovereign AI consulting (PhariaAI) in 2024. Exited API race.

Strategic exit from frontier market.

All 5: contingent exits, not configuration failures.

The configurations themselves remain inhabited by viable vendors. The Death Zone is structurally determined — deviant cases are not.

Contributions — Theory, Method, and Policy

A framework that predicts both what exists and what cannot exist.

Complementor Engagement

Grounds FM viability in active 3rd-party adoption. The moment a tech project becomes a platform.

Iansiti & Levien (2004)

Ecosystem Generativity

Open-weight release as structural insurance. 20/20 vendors viable — zero exceptions.

Zittrain (2006)

Functional Equivalence

FM vendors ARE platforms if they match, assemble, and manage knowledge. PEMAK applies directly.

Brousseau & Pénard (2007)

Equifinality as Equilibrium

Multiple paths to viability are NOT transitional. Capital constraints sustain the ecology.

Chanson & Rocchi (2024)

Policy

Configuration-Aware Regulation

Focus on bundling risks and adjacent monopoly cross-subsidies, not just capability benchmarks.

Track aggregate deployment patterns across the ecosystem, not just originating choices.

Integrated standalone vendors without monopoly = early-warning signal for acqui-hire risk.

A Coherent Structure Beneath the Chaos

Open-weight release turns community into insurance. Zero-cost complementor distribution.

Trust premium + active orchestration. Cloud compute replaces sunk capital costs.

Adjacent monopoly cross-subsidy. Captive multi-million user distribution.

~MONOPOLY · INTEGRATED: impossible without cross-subsidy.

Ecosystem generativity structurally forecloses organizational exit.

"A framework that predicts only what exists is descriptive. A framework that predicts what cannot exist — and explains why — is causal."